@rosie4loop

Regarding the artistic spacestations, the goal was to include all the compounds the players designed, which we then forwarded to the analysis. The spaceships do indeed score well only because of a glitch, but we did include them in the analysis, along with the rest of the initial Round 5 compounds. We didn't want to lose the effort of people who didn't run across the scoring glitch by throwing out all of Round 5. The spaceships and other bad Round 5 compounds were likely all promptly thrown out by the molecular weight filters and other property considerations, so they probably don't actually clutter the analysis. (I haven't checked, but I doubt they're in the 1700-compound listing.) We also didn't directly use the Foldit scores in the compound selection, so there's no bias due to the outrageous Foldit scores.

But that's a good caveat about the compound listing. The compounds in the supplemental are just a dump of everything Foldit players generated. We're not claiming that any of the compounds are actually good, or that the Foldit round score is necessarily correlated with quality. Hopefully that comes across from the extensive post-processing we did to actually figure out which compounds to test.

@rmoretti said: "(In fact, the BI scientists doing the compound selection didn't use Foldit scores to select molecules. The Foldit scores were important to guide which molecules were generated by the player, but once the molecules were generated we used other evaluation metrics to actually select which ones were tested.)" What were the other metrics used?

@rmoretti Thanks for the explanation. I understand the reason for keeping all the compounds. If I understand correctly, the role of the Foldit score here is to guide the generation of ideas, not to support quantitative analysis.

My only concern here is that the (glitched) Foldit scores in the compound list could make people question the validity of the Drugit algorithm, raising concerns about using it e.g. for educational purposes. (I'm trying to convince others to use the game version of Foldit for teaching.)

For people with some experience in computer-aided drug design, we know that relying on the score generated by a single piece of software is not the best idea, hence the common approaches of filtering and rescoring/pose refinement with other methods like redocking, MD, or comparative prediction with multiple docking programs. These kinds of post-processing techniques were also applied this time, as mentioned in the manuscript.

But for students or researchers from other fields, it's just too easy to judge the quality of a piece of software or a compound based on a single number. Hopefully in the near future the Drugit algorithm will be good enough to predict the (relative) quality of designs!

Thanks for your answers, rmoretti and rosie4loop.

Just another suggestion:

From a player's perspective, it would be useful if the suggested library compounds were filtered to remove "bad" elements. It often happens that a library proposes 65 molecules, many with "bad groups" or some other bad element.

For future design puzzles like this, I wonder if it would make sense to err on the conservative side regarding the conditions that must be met to avoid a score penalty, while also implementing additional bonuses for functional groups that are more likely to actually bind (but otherwise wouldn't score well under normal circumstances)?

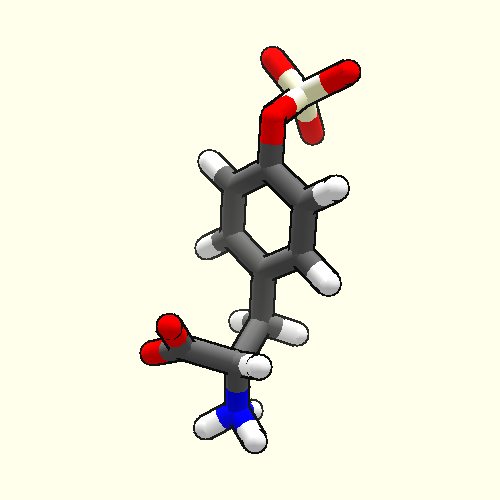

One detail that I noticed from the list of synthesized compounds in Table S2 is that they tend to stay well below the limits specified in Table S1 in terms of TPSA and number of rotatable bonds, while a few actually include halogens or pyrazoline rings. In that regard, my Round 10 solution ( https://foldit.fandom.com/wiki/Puzzle_2091?file=Irc_92665_1642032535_S1_infjamc_28815.png ) seemed like another case of "abusing the score function" in retrospect… in the sense that it was designed to squeeze out as many points as possible by staying just below the limits that would incur a score penalty.

(For this specific example, my strategy essentially boiled down to using the maximum number of amide / amine groups allowed by the TPSA requirement in order to keep clogP low enough, while tacking on as many methyl groups as possible that wouldn't cause excessive clashing or exceed the limits for ligand weight or rotatable bonds. Perhaps a limit of <7 for rotatable bonds or number of amides might have prevented this?)

I also noticed that some of the additional filters applied in the selection of compounds are not included in the game objectives. I understand if that's to increase the chemical diversity of designs, to keep the list of objectives shorter, or because they were decided only after the end of the puzzle series. However, I believe it'd be good to also list them somewhere, e.g. in a forum post, so players who want to have their compounds experimentally validated have a better guide to what they should create.

Comparing the list in the supplementary information of the preprint and in the current game (not sure about VHL), these additional filters include the following:

- no. of rings < 5
- ALogP < 6
- no. of H-bond acceptors < 7
- no. of H-bond donors < 5
- quantitative estimation of drug-likeness (QED) > 0.3
- Removing compounds with the following substructures:
  - fused benzenes
  - sequential alkenes
  - no. of alkynes > 1
  - an alkyne and a nitrile in the same molecule
  - aliphatic ethers in long chains
  - no. of amides > 3
  - aliphatic chain with at least 5 carbons (should be filtered by bad groups?)
  - no. of methyl groups < 3 on heteroaromatic rings
  - and other unwanted features

(Not sure if I've missed any of them; I only quickly checked some of them in puzzle 2307. I'm not putting cLogP, MW, no. of atoms etc. in the list since the limits may vary between rounds of a puzzle.)
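To make the numeric filters above concrete, here is a minimal Python sketch of how a player-side checklist could look. It is only an illustration, not the pipeline used in the preprint: the `CompoundProps` container and the `failed_filters` function are hypothetical names, the descriptors are assumed to be precomputed elsewhere (for example with a cheminformatics toolkit), and the thresholds are read as H-bond acceptors < 7 and H-bond donors < 5. The substructure filters that need pattern matching (fused benzenes, sequential alkenes, long aliphatic ethers) are left out.

```python
from dataclasses import dataclass


@dataclass
class CompoundProps:
    """Precomputed descriptors for one compound (hypothetical container)."""
    n_rings: int
    alogp: float
    hba: int          # number of H-bond acceptors
    hbd: int          # number of H-bond donors
    qed: float        # quantitative estimate of drug-likeness, 0..1
    n_amides: int
    n_alkynes: int
    has_nitrile: bool


def failed_filters(c: CompoundProps) -> list[str]:
    """Return the names of the filters a compound fails (empty list = passes all)."""
    checks = {
        "rings < 5": c.n_rings < 5,
        "ALogP < 6": c.alogp < 6,
        "HBA < 7": c.hba < 7,
        "HBD < 5": c.hbd < 5,
        "QED > 0.3": c.qed > 0.3,
        # The list removes compounds with more than 3 amides or more than 1 alkyne.
        "amides <= 3": c.n_amides <= 3,
        "alkynes <= 1": c.n_alkynes <= 1,
        "no alkyne+nitrile": not (c.n_alkynes > 0 and c.has_nitrile),
    }
    return [name for name, ok in checks.items() if not ok]


# Usage with made-up descriptor values:
example = CompoundProps(n_rings=5, alogp=2.5, hba=4, hbd=2, qed=0.6,
                        n_amides=4, n_alkynes=1, has_nitrile=True)
print(failed_filters(example))
```

Returning the names of the failed filters, rather than a single pass/fail flag, is a design choice meant to mirror how the in-game objectives tell a player which condition is being violated.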

Other metrics like "Efflux and permeability predictions", redocking and ABFE are probably impossible to calculate in the game, but explaining what they are and why these metrics are used would also be beneficial for players, educators and learners.

In https://fold.it/forum/posts/76487 above, rmoretti said:

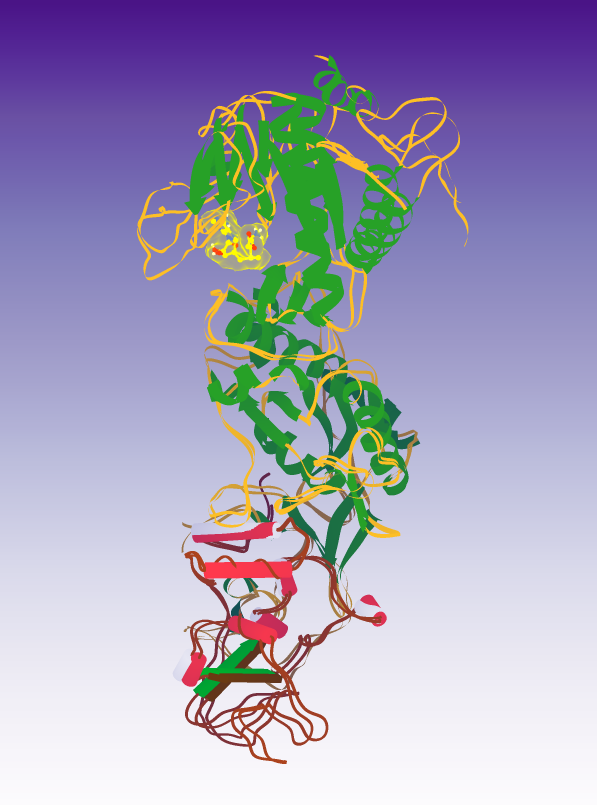

If you play online, the client periodically sends the structure you're working on (or rather your best structure in the past X minutes) to the server. For the VHL puzzles, we pooled all the structures people sent to the server: top scoring for the round, share-with-scientist and the automatic "in progress" uploads.

What happens if you have 5 Foldit clients running on one computer and 2 Foldit clients running on another computer? Does each of the 7 clients send its own best structure from the past X minutes to the server? Does the server save all 7 of these structures, or does the server only save the best-scoring 1 of 7 structures? Also, say one client is sending structures every 5 minutes and its best score from 5:00-5:05pm was 8200, its best score from 5:05-5:10pm was 8700, its best score from 5:10-5:15pm was 8300, & its best score from 5:15-5:20pm was 8800. Would the structures sent to the server have the scores 8200, 8700, 8300, & 8800, or would they instead have the scores 8200, 8700, 8700, & 8800? Do all clients continue to send scores to the server in the same way after a puzzle ends? Also, when a Foldit Staff member goes to analyze the results (which could be several days after a puzzle ends), do they download only results sent to the server up until the puzzle expired, or do they download all the results sent to the server so far?

I ask all these questions because I often wonder on the last day of a puzzle if I should quit all my clients early and share all their key solutions with scientists before the puzzle ends. Would it work just as well (or even better) if I instead let all my clients keep running until after the puzzle ends and then quit each client at my leisure later on, sharing the latest key results from each client with scientists then? I'd imagine that many players from around the world are not available right before a puzzle ends to quit all their clients and share all their key results then. Should they quit their clients early to make sure all their results get sent to the server?

@jeff101 The structures from the individual clients should all be accumulated by the server. The "best in the past X minutes" is handled client side (to avoid excessive network traffic). I believe that it's the best score since the last time the structure was sent, rather than the best score since you began the puzzle. And this happens automatically while the puzzle is being played and doesn't require exiting the puzzle or quitting the client.
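As a rough illustration of the difference between the two behaviours asked about ("best since the last send" versus "best since the puzzle began"), here is a small Python sketch. It is not the Foldit client code: both function names are hypothetical, and it assumes each upload window contains at least one score.

```python
def uploads_best_since_last_send(scores_per_window):
    """Send the best score seen within each window, then start fresh."""
    return [max(window) for window in scores_per_window]


def uploads_best_so_far(scores_per_window):
    """Send the best score seen since the start of the puzzle."""
    sent = []
    best = float("-inf")
    for window in scores_per_window:
        best = max(best, max(window))
        sent.append(best)
    return sent


# Four 5-minute windows whose best scores are 8200, 8700, 8300 and 8800
# (the other scores inside each window are made up).
windows = [[8000, 8200], [8700, 8500], [8300, 8100], [8800]]

print(uploads_best_since_last_send(windows))  # [8200, 8700, 8300, 8800]
print(uploads_best_so_far(windows))           # [8200, 8700, 8700, 8800]
```

If the "best since the last send" description is right, the first series is what the server would receive for that example.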

The sending of the structures does continue even after the puzzle is over. However, we typically pull the results off the server once. While that would include structures submitted between the puzzle expiration and when we collect the structures, any structures contributed after the download point won't be counted in the analysis. (And that's assuming that the analysis includes all the structures – sometimes we focus just on the best-per-player structures, which don't get updated after the puzzle closes.)

I should note that the "Share with Scientist" feature bypasses the periodic annotation mechanism, so if there's a particular structure you think we should take a look at, sharing with scientists is the way to go. – But if you share after we download the results, those shares won't be included (as shares).

I was thinking about how each Foldit client automatically sends a solution to the Foldit server every X minutes. What would a client send while running a recipe like Ligand docker? Ligand docker does many cycles. Each cycle starts with the best-scoring solution from the run so far. Then Ligand docker adds some bands to the solution and starts wiggling it. Usually the score goes down during this stage. Then Ligand docker removes the bands and lets the solution wiggle where it wants to. Often this raises the score but usually not enough to beat the score the cycle started with. If the new score beats the best score in the run so far, it becomes the new best score in the run so far. Then the next cycle starts from this new best score.

What the Foldit server sees will depend on how long each cycle in Ligand docker (let's call it T minutes) is compared to X minutes. If X is much larger than T (often written X ≫ T), the server will probably see a series of scores rising smoothly and slowly. If X is much smaller than T (often written X ≪ T), the server will see a wider range of scores that fluctuate while on average they slowly rise. The series of solutions and scores for X ≪ T will be more interesting than the series for X ≫ T because the X ≪ T series will sample more different conformations and peaks & valleys in the protein's energy landscape.

The above all assumes that the client sends the server the best score from the past X minutes. What if the client instead just sent the present score every X minutes? I think the series of scores received by the server would then be more like the X ≪ T series above, fluctuating widely vs time while rising slowly on average, and sampling many different conformations and peaks & valleys in the protein's energy landscape. This would happen no matter what T is. This makes me think that having each Foldit client just send its present solution and score to the server every X minutes would be more useful than having each Foldit client send to the server its best score over the past X minutes.
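The comparison above can be tried out on a toy model. The Python sketch below is only an illustration under strong assumptions: `recipe_trace` is a made-up stand-in for a cycling recipe (each cycle starts at the run's best score, dips in a triangle shape, and the next cycle starts `gain` points higher), time is counted in whole minutes, and none of the names or numbers come from Foldit itself.

```python
def recipe_trace(n_minutes, cycle_len, dip=500, gain=10, start=8000):
    """Toy per-minute score trace for a recipe that runs in cycles of cycle_len minutes."""
    trace = []
    for t in range(n_minutes):
        cycle, phase = divmod(t, cycle_len)
        best = start + gain * cycle
        # Triangular dip: zero at the start of a cycle, deepest mid-cycle.
        depth = dip * (cycle_len - abs(2 * phase - cycle_len)) // cycle_len
        trace.append(best - depth)
    return trace


def sample_best_in_window(trace, x):
    """What the server would see if the client sends the best score of each x-minute window."""
    return [max(trace[i:i + x]) for i in range(0, len(trace), x)]


def sample_current(trace, x):
    """What the server would see if the client sends the present score every x minutes."""
    return [trace[i + x - 1] for i in range(0, len(trace) - x + 1, x)]


trace = recipe_trace(n_minutes=100, cycle_len=10)   # T = 10
print(sample_best_in_window(trace, 25))  # [8020, 8040, 8070, 8090]
print(sample_current(trace, 25))         # [7620, 7940, 7670, 7990]
```

With X = 25 and T = 10, the best-in-window series rises smoothly while the present-score series fluctuates, which matches the intuition in the post. One caveat from this toy model: if X is an exact multiple of T, the present-score samples always land on the same phase of the cycle and look smooth as well, so the amount of fluctuation would depend on how X and T line up.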

I'm guessing that at this moment, due to the limitations of the scoring function, the ligand identity is more important than the modelled pose, and the modelled pose is a lot more important than the Foldit score.

I guess this because, after getting the designs from players, there's likely some post-processing to reevaluate the ligands and refine the binding pose, while the player's modelled binding pose would be used for comparison but would not be the only factor determining the choice of compounds for experimental validation.

Until the Foldit score for ligand design is more meaningful quantitatively, e.g. having a finer and more optimal torsional scoring instead of the 1000-700-…-negative, the conformational energy landscape would be rather inaccurate.

Also, "bad groups", "TPSA", "cLogP" etc aren't directly related to energy, instead they're serving as a guide for ligand design. In theory there can be a molecule having high penalties from these filters, but being a perfect binder.

(These are the reasons I disabled the filters when I tested using Foldit to sample an unbinding pathway: I couldn't see the barriers at the bottleneck with the torsional filters enabled. But the torsions are important! Personally I'm very interested in modelling the free energy landscape of ligands. I do this in my own research with molecular dynamics simulations, but it's probably not easy with Foldit at the current stage.)